for more essays on the Realm of the Circuit visit www.metaforas.org

Published in The Education of an E-Designer, Steven Heller, ed. Allworth Press, 2001.

As members of a thinking community, we must accept this premise: we are no longer anticipating a revolution. It has already happened. It is time to build on its promise, transcend the inevitable losses, and become more comfortable, more human, with the change now wrought.

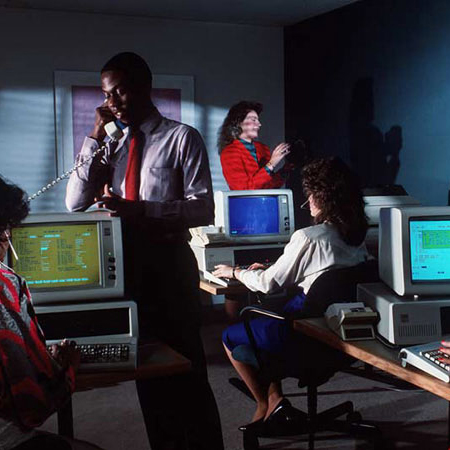

This revolution has created the possibility of reinventing ways of interacting, thinking and creating that were lost in history. This is manifest in the advent of the digital computer and its accompanying methodologies, which give unprecedented opportunities for working in ways that emphasize relationships between bodies of knowledge and human minds. The computer is valuable in its ability to enable us to reconceptualize our relationship to knowledge, and to organize it, rather than merely accumulate information. The methodologies of the computer allow us to share a commonality of human expression that crosses disciplines. If approached openly by thinking people who hold the humanist tradition dear, they allow a means for creativity which will enable us to reinforce that which makes us human. The great achievements of man lie in the quest to expose the unseen, and the computer’s value lies in its ability to further these achievements.

The ways of working in the digital world, however, are not new, as we shall see. Indeed, precursors of multimedia and hypertext have been around for centuries. The present strengths of the computer (its speed, its flexibility, and its retention of fact) only enhance what has already been embedded in the constant course of human intelligence: the desire to create new meanings through relationship.

We posit a new creative individual, the “creative interlocutor,” a navigator of associative trails of thought and resource, who enables others to freely and creatively manage their human interests. This individual is one who is integrated: his creativity functions as an organic part of society, and he acts to connect for the common good. The creative interlocutor is also an integrator in his ability to negotiate the disparate fields of human knowledge and bring them together in previously unimagined ways. In so doing, he enables others to further their creative potentials.

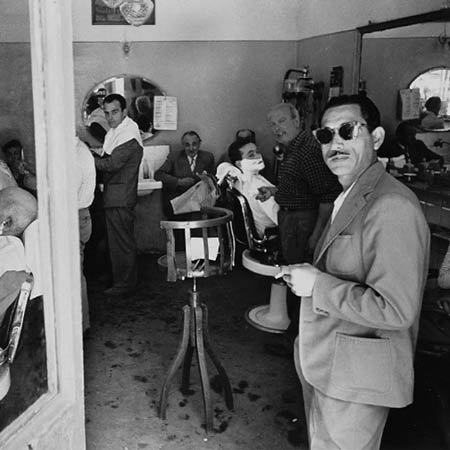

Herein we will make the case that technology has always aided, rather than hindered, human expression and creativity. Human beings, however, have always had to overcome an initial hesitancy toward each new technology, whether it be the telegraph or the computer. Henry David Thoreau remarked: “We are in a great haste to construct a telegraph from Maine to Texas. But Maine and Texas, it may be, have nothing important to communicate.” Clearly, today one does not doubt the humanity of a grandmother in Maine who talks to her granddaughter in Texas. What we lament is the loss of content in that conversation.

We seek to negate the self-fulfilling prophecy engendered by entrenched interests that lament the loss of their primacy by blaming the inhumanity of the technology. You can’t touch it, you can’t read in bed, it hurts my eyes, and so forth. These regrets and fears, like all others, are inhibiting. All too often they segregate the minds of humanists and artists whose creative input is vitally needed in the implementation of this new technology. The irony is that this feeling unnecessarily reinforces the power of the technocrats who then direct the design and implementation of the technology in a self-promoting way. Ask not what the computer can do for you, but what you can do for the computer.

HUMANISM AND THE LIBERAL ARTS

The computer has value only as it enhances that which makes us human. Most likely this is our ability to learn, or rather to learn how to learn: the knack to order, manage and reconfigure that which we know. Our humanity lies in our ability to transmit from one to another, allowing others to gain access to successful formulations and articulations that further our notion of being. This is what builds culture: the accumulated conceptual riches brought through the history of civilization.

We use the Liberal Arts1 to understand these riches. They treat the fields of knowledge in a balanced and equal manner, emphasizing the commonality of human experience and its expression within its diverse fields. A student of the liberal arts creates meaning by weaving a nurturing blanket from the common threads that hold the fields together, rather than by focusing on the seams which set them apart. This balance between fields of knowledge, and search for commonality is precisely what is furthered by a judicious use of multimedia digital technology.

Thinkers of the Enlightenment rediscovered patterns of thinking that today are embodied in the technology. Francis Bacon followed the Renaissance masters as a model of the creative interlocutor, connecting the spirit of the Enlightenment with the great Age of Reason. Through his methodology of inductive reasoning, he sought to free intelligence from dogma that constrained and limited our understanding of the greater rational scheme of the world. In Novum Organum, published in 1620, he argues not only for scientific methodology, but also for its integration with the arts and the humanities. Inductive reasoning, the accumulation of information and the detection of patterns therein, offers a commonality of procedure that dispels notions of a priori preconception. His philosophies opened the field of human inquiry to an ever-expanding body of knowledge. Francis Bacon’s life, rooted in philosophy, politics, and the creative art of writing, is exemplary of methodological inquiry furthering the connectedness of our human interest.

Maria Sibylla Merian (1647-1717)2 was the visual arts analogue to Bacon. Through her use of the evolving technologies of optical magnification and mechanical reproduction, she was able to further humanist values and the ideals of the Enlightenment. Born to a family of bookmakers, she took at an early age to observing and sketching insects. She would take the observational skills learned as a child and go on to publish two major works: Raupen and Metamorphosis, both editions of copperplate prints. In these works, she depicts the insect and plant life of Europe and Surinam to the emerging intellectual class of the period. Merian was unique among botanists of her age. She depicted insects and plants not as specimens, but rather as creatures intricately and intimately involved in the cycle of life. She was not interested in then-conventional classification schemes or in “cabinets of wonder” that present sterile specimens. In fact, she told one potential collaborator to stop sending her dead insects, as she was only interested in “the formation, propagation and metamorphosis of creatures.”3

Prior to the seventeenth century, our understanding of the world was formed by a combination of myth and doctrine. During the Enlightenment, the West found a new fascination with the real, and developed ways of thinking and technology to explore the world. Merian was inspired by the new optical technologies of her time; the compound microscope came about in the 1660s, and Athanasius Kircher published his book Ars magna lucis et umbrae, which discussed the camera obscura as a tool for observation and illustration. In her imaginative use of these tools, Merian was an artist who responded to Enlightenment discourse about knowledge and the natural world, and effortlessly crossed boundaries.

The fruits of scientific methodology fostered by such figures as Bacon, Merian and the great thinkers of the Age of Reason brought forth the Industrial Age. In this new age, the ever-expanding fields of knowledge required specialization at the expense of more universally learned individuals.

CROSS FERTILIZATION

The idea that one field might enrich another is also not a new one. Though it seems to be forgotten by the over-specialization emphasized in our learning institutions, the concept and practice of what is currently termed multimedia is an age-old notion. Multimedia is not suggested merely by technological advancement, but rather it is grounded in fundamental human practice that predates the invention of the computer by thousands of years. Early uses of multimedia were cross disciplinary in an unselfconscious way. The advent of the computer did not create the technical tangle of multimedia, but rather manifests a pre-existing need in our culture for a more democratic, universal and diverse way to communicate.

We can see multimedia in the burial rituals of the ancient Egyptians, who made no demarcation between the media employed in the great technology of the pyramids and their elaborate burial rituals. These burial sites combined elements of architecture, writing, sculpture, and during the rite, even music and performance, all for the purpose of captivating and mystifying the laity under the dominance of their rulers. In the Middle Ages, the prevalent form of multimedia was at the same time a form of mass communication. The cathedral communicated the awe-inspiring Christian spiritual doctrine which was the dominant means of rationalizing human existence. The message was made stronger by its embodiment in a variety of media stimulating the senses: visual (stained glass and statues), sound (music and hymn), touch and taste (performance and mass), and smell (incense and myrrh). Writing itself was the means for codifying the knowledge held in the cathedral, the knowledge to sort out the patterns of our existence, to know the unknowable.

All of these technologies were beyond the reach of the ordinary man, since books were tremendously expensive to produce and few could read. The expense and duration of constructing a cathedral made it an option only for the wealthy. It was of course not portable, so it remained in a central location, accessible only to those in its immediate vicinity. Due to these inherent, and perhaps intentional, constraints, knowledge and, thus, power were concentrated in the hands of the theocracy. It was not until the advent of the printed book that the quest for knowledge could become a part of a universally inclusive culture. Yet, printing came with a price: a devaluation of multimodal communication.

Victor Hugo comments further on the advent of printing, its narrowing of our field of expression, and the dominance of the word over the image in his nineteenth-century novel The Hunchback of Notre Dame. A character in his novel, a priest in fifteenth-century France, directly after the invention of movable type, compares the newly invented book to the cathedral and states, “this will kill that”: the book will kill the cathedral. Yet, it did not. Hugo’s phrase also refers to the conflict between the text of the book and the multimedia imagery of the church. By the nineteenth century, the text had become dominant as a means of discourse. For a century to follow, the word, through the great dissemination of the written text, was the primary source for creative inspiration. If nothing else, it allowed for the distribution of descriptive pornography and a stimulation that gave rise to Modernism.

But all was short-lived. In the twentieth century, Marshall McLuhan, in his book The Gutenberg Galaxy, foresaw the rise of the image, empowered by global visual media such as television. He envisioned “the civilization of imagery” wherein the word is no longer the sole stimulating force in the imagination. Today there is an unanswerable conundrum: which is it that stimulates the imagination first or more, the word or the image? The computer doesn’t care, because it’s a multimedia cathedral!

Predictably, the phrase “this will kill that” was repeated with the invention of photography, and is all too often heard again today as we experience the digital revolution. Much in the same way that the text of the book threatened the multimodal cathedral, or photography’s imagery that of painting, the computer now threatens the book. Likely, there will be a co-existence in the media. The book will likely not disappear, but will inevitably change in function and meaning, as did painting. Furthermore, the computer offers us another Renaissance in our extensions of creative possibilities through the coequal distribution and interconnectedness of age-old multimedia. The Web is an ever-expanding territory of thought, commerce, and entertainment.

Obviously, there is no doubt that technology relieves us of burdensome tasks, whether it is the welding of metal or of numbers or of images, or of all of them together. But have we allowed it to free us in the greater pursuits of our humanness? Perhaps the blame lies not with technology but with our systems of learning.

All too often today, intellectual ideas are treated as chattel property whose purpose remains locked in the discourse of the “knowing” rather than serving the common good. This notion segregates us from our commonality of intelligence and unravels with technobabble and jargonization the very fiber of our humanity. Pre-Enlightenment myth returns to these forms. Specialists sequester themselves in monasteries of learning, untouched by the great unwashed masses. Something medieval is happening again.

Is it not astounding that at Harvard University, as recently as 1989, the late great Italian poet Italo Calvino needed to remind his audience of what should have been evident in the liberal arts ideal: Creative visualization is a process that, while not “originating in the heavens,” goes beyond any specific knowledge or intention of the individual to form a kind of transcendence. Calvino stated that not only poets and novelists deal with this problem, but scientists as well. “To draw on the gulf of potential multiplicity is indispensable to any form of knowledge. The poet’s mind, and at a few decisive moments the mind of the scientist, works according to a process of association of images that is the quickest way to link and to choose between the infinite forms of the possible and the impossible. The imagination is a kind of electronic machine that takes account of all possible combinations and chooses the ones that are appropriate to a particular purpose, or simply the most interesting, pleasing, or amusing.”4

JOHN DEWEY: PRAGMATIC VISIONARY

Earlier in the twentieth century, John Dewey, in his pragmatism, advocated an educational system which would recognize the common humanist thread within experience, communication, and art. In his analysis of the Greek Parthenon he noted:

The collective life knew no boundaries between what was characteristic of these places and operations and the arts that brought color, grace and dignity into them. Painting and sculpture were organically one with architecture, as that was one with the social purpose the buildings served. Music and song were intimate parts of the rites and ceremonies in which the meaning of group life was consummated.5

We ought not to have to remind today’s thinkers of his philosophies, and yet we find we must, over and over again. Dewey sought to recover the continuity of aesthetic experience and normal processes of living through proper education. All art is the product of interaction of living organism and environment and an undergoing and a doing which involves a reorganization of actions and materials.6 Aesthetic understanding must start with and never forget that the roots of art and beauty lie in basic vital functions. Herein is an echo of Bacon’s earlier notion that all pattern is of the “machine of God.”

Marvin Minsky, one of the founders of modern computer science, in a like manner has portrayed the mind as a society of tiny components forming a magnificent puzzle of evolving imagination. In his book The Society of Mind, he cites Papert’s principle, the notion proposed by Seymour Papert regarding mental growth, wherein Papert theorized that intellectual progress is based not simply on the acquisition of new skills, but also on the acquisition of new administrative ways to use what one already knows.7 Our conception of the computer as an art-making and communication device is just that—a tool which fosters and encourages the creative re-administration of information.

Dewey envisioned an educational system which imparted pragmatic information without elitism. In order to allow education to become a tool that enables humanity to cultivate and reorganize our work and culture, we must abandon authoritarian methods of educational practice, where the teacher is the endowed disseminator of privileged knowledge. Humanists must remember that the computer is a tool of multimedia communication between the source of information and the user, without giving authority to the selected few. This communication becomes an ongoing ebb and flow of escalating meaning/communication which engages and empowers the inquisitive user. As a tool for art-making and scientific thinking, it is unique, and allows us the potential to realize Dewey’s vision. More than at any other time in history, it is important to educate students with tools, both technical and intellectual, to formulate new patterns between the details of knowledge rather than to expect them to accumulate information like books on a shelf.8 Cyber communication must be made to be the intelligent extension of human capability for new discovery. Communication is education.

DATASET: ALL ART IS IMAGE

Whether communication takes the form of vocal utterances, ink on paper, or modulation of radio waves, the intention has always been the transfer of meaning from one individual to another. This creates an image that will convey an idea. It is in our humanism that we attempt to make manifest some facet of experience/content and communicate it to another person or persons.9

Until now, the medium has determined both the audience for the message and its destination. Thus, oil paintings were destined for the museum, text for the printed page, music for the radio. Subcultures have grown up around these destinations, and these subcultures have become insular and self-referential. Yet the separations are artificial, imposed by the restraints of the technology and mostly by the lack of vision of those working within politically defined fields. These boundaries between media also forced a separation of audiences, creating the artificial divides of high and low culture. Evolution of media allows an evolution of audience. With its virtual writing spaces,10 the computer positions us to transcend these restraints, and to reunite all experience, within its algorithms, to recognize the common humanism within all communication.

The digital computer, when combined with the optical scanner, the music sampler and a myriad of other computer input devices, allows us to reduce all physical media to virtual binary digits. At this point, when we have digitized sound, or photographs, or film, it is all equal in the cathedral-like space of the computer, without dogma. Images become reduced to a dataset: nothing more, nothing less. Every digital movie, every digital image, every digital sound is nothing more than a sequence of zeros and ones stored in the memory of the computer. These numbers can now be seamlessly combined and juxtaposed. In the computer’s virtual spaces, all forms of communication are equal.
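The reduction described above can be made concrete with a minimal sketch. The fragment values below (two letters, one pixel, one sound sample) are illustrative assumptions, not data from any real work; the helper name `to_bits` is likewise hypothetical.

```python
import struct

def to_bits(data: bytes) -> str:
    """Render raw bytes as a string of zeros and ones."""
    return "".join(f"{byte:08b}" for byte in data)

# Three hypothetical fragments of "media" (values chosen for illustration only).
text = "Hi".encode("utf-8")        # two characters of text
pixel = bytes([255, 128, 0])       # one orange pixel as red, green, blue
sample = struct.pack("<h", 1000)   # one 16-bit sound sample, little-endian

# Once digitized, the fragments can be juxtaposed in a single sequence.
dataset = text + pixel + sample

for name, chunk in [("text", text), ("pixel", pixel), ("sample", sample)]:
    print(f"{name:6} {to_bits(chunk)}")
```

Nothing in the resulting sequence marks where the word ends and the image or the sound begins; that distinction lives only in how we choose to read the numbers back.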

The computer, in its use of multimedia, merely reinforces common and historic themes. In order to communicate in the interest of evolving the human condition, the creative tools, namely the computer network, must be accessible to all who are interested. The computer has the ability to structure all communication to the common and accessible level implied within the language of the dataset. Hence, it empowers the user to also reorganize any message in new ways that allow for pattern thinking, trans-disciplinary intercourse, and the visualization of the unseen.

THE MEMEX’S ASSOCIATIVE INDEX

The idea of making a large body of information available to others is not new. In 350 B.C., the Athenian Speusippus created an encyclopedia that purported to contain all human knowledge, as did Lu Pu-Wei in China in 239 B.C., who gathered 3,000 known scholars and assembled their knowledge into a work of more than 200,000 words. One of the limitations of these encyclopedias was their mass: Pliny the Elder’s encyclopedia, Natural History, compiled in A.D. 79, was said to comprise thirty-seven volumes containing 2,500 chapters. The next limitation was cost: at a time when books were reproduced by hand, works of this magnitude were fabulously expensive. The final limitation was more of a cognitive one: when large bodies of information are put together, some organizational scheme must be used. Modern encyclopedias are organized more or less alphabetically, with one entry following another from A to Z. This is a fairly arbitrary, modern, and limiting system. Diderot’s Encyclopédie, a text meant to further the Enlightenment by bringing out the essential principles of art and science, was organized by tasks and preoccupations.

Vannevar Bush, science advisor to Franklin Delano Roosevelt, has been somewhat forgotten, yet stands as a remarkable creative interlocutor. In his 1945 vision of the memex, he held out the solutions for the limitations of human mind and dexterity. The memex was an unrealized tool that a more enlightened Harvard audience, listening to Calvino, might already have employed. His machine improves memory, like an encyclopedia, while allowing the mind to operate “by association. With one item in its grasp, it snaps instantly to the next that is suggested by its association of thoughts.”11 His vision of its ability to scan information allows the user to recombine art and knowledge, to become a creative interlocutor.

He talked of new organizational schemes, ones which can be customized to the needs and interests of the particular users. His device combines two of the liberating capabilities of the digital computer: reduction of images, words, and music to a dataset, and networking in what was meant to be a personal device. He foresaw both the internal network of hypertext and the possibilities of the external network.

Remarkably, today Bush’s mechanism is as common as the desktop computer, and yet his essential idea of the memex, that users can be empowered by hypertextual trails through information, is unfulfilled. Why, we ask? It is not the fault of technology, but rather a failure of entrenched values and limited vision. The Web, the most prevalent implementation of hypertext, is essentially a one-way distribution system, where the user has little facility to be creative. We foresee the use of the computer networks to facilitate and empower the creative interlocutor.

The creative interlocutor uses hypertext and hypermedia to create trails; these trails transform data into knowledge to be redistributed to others, thus feeding the network. The memex, and, likewise, the computer create a miniature network within the data they hold in their memory. When linked to a larger network, such as the World Wide Web, their ability to create new meaning is increased almost infinitely.
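One way to picture such trails is as a small data structure. The sketch below is an illustration under stated assumptions, not Bush's design: the class name `Memex`, its methods, and the sample items are all hypothetical, chosen only to show items joined by named associative trails that a user can build and then hand on to others.

```python
from collections import defaultdict

class Memex:
    """Hypothetical store of items joined by named associative trails."""

    def __init__(self):
        self.items = {}                  # key -> content
        self.trails = defaultdict(list)  # trail name -> ordered list of keys

    def add_item(self, key, content):
        self.items[key] = content

    def link(self, trail, key):
        """Append an existing item to a trail; the same item may sit on many trails."""
        if key not in self.items:
            raise KeyError(key)
        self.trails[trail].append(key)

    def follow(self, trail):
        """Walk a trail in the order its maker laid it down."""
        return [self.items[key] for key in self.trails[trail]]

    def trails_through(self, key):
        """List every trail that passes through an item."""
        return sorted(name for name, keys in self.trails.items() if key in keys)

memex = Memex()
memex.add_item("merian", "Merian's copperplate prints of insects")
memex.add_item("kircher", "Kircher on the camera obscura")
memex.add_item("bacon", "Bacon on inductive reasoning")
memex.link("optics and art", "kircher")
memex.link("optics and art", "merian")
memex.link("observation", "bacon")
memex.link("observation", "merian")

print(memex.follow("optics and art"))
print(memex.trails_through("merian"))
```

The same item belongs to two trails at once, which is the point: the organization is made by the user's associations rather than by an alphabet.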

Our ability to nurture and engage our own genius is stifled by an education that fails to recognize the value of associative capabilities inherent in this network. Clearly, this is the task of the redefined humanist and visual education, or what we once referred to as the classic Liberal Arts. It must engage us all, as scientists, engineers, artists, and scholars. Technology has failed us in accomplishing this goal because it has been segregated from humanist activity.

THE COMPUTER AND THE CREATIVE INTERLOCUTOR

A new artist, the interlocutor-designer, should be a product of an enlightened engagement fostered by a new educational system which is trans-disciplinary in nature.12 The creative interlocutor is one who facilitates the exchange of ideas and information between one human need and another. This person is the producer, director, the organizer-navigator. More specifically, this person is the curator, editor and collector, then the maker, weaver, welder, builder and distributor. History reminds us easily of such figures as Leonardo da Vinci, Francis Bacon and Thomas Jefferson. But one must also ponder the great stretches by multidisciplinary minds such as the weavers of the Bayeux tapestry, Maria Sibylla Merian, Samuel F. B. Morse, the Roeblings, Booker T. Washington, Laszlo Moholy-Nagy and countless others whose reach across boundaries changed civilization for the better. Creative interlocutors are programmers, producers, inventors, researchers, teachers, scholars and volunteers. The creative interlocutor negotiates revolutionary associations, a kind of new genius.

We see a budding of the creative interlocutor in the collaborative spaces of the Internet. The language of the computer is a shared language that allows participation by those who so choose. In the examples to follow, there is no longer a single creator, but rather a collective genius, a web of creative nodes that weave together previously disconnected pieces of information.13 As innovators, creative interlocutors use their art in a manner which facilitates others to find and define their own creative meaning in the interrelationship of ideas and forms.

In 1977, the inventors of the RSA encryption scheme (the one currently used by Netscape Navigator) put forward a challenge.14 They encoded a message, and offered a $100 reward to anyone who could crack it. They felt that given the computing resources of the time, and even granting advances in chip speed by a factor of millions, nobody would be able to break their code in the foreseeable future. They were wrong! Instead of thinking of a single computer as a self-contained and limited system, Derek Atkins, a twenty-one-year-old engineering student at MIT, realized that while one computer would take a long time to crack the code, he might harness the power of the Internet and distribute the computing load over many computers. And that’s exactly what he did. In 1991 he directed his friends to use a recently discovered mathematical method to devise a program that would crack the code, and had it ready to go by mid-1993. The program was distributed over the Internet to more than 1,500 computers on six continents to create an expanded computer that churned out 5,000 MIPS years.15 The code was cracked in the spring of 1994 by looking beyond the boundary of the individual computer and thus consolidating the power of the network.
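The principle of dividing one search among many machines can be sketched in a few lines. This is a toy under loud assumptions: it swaps in naive trial division for the far more sophisticated method the volunteers used, it stands in threads on one machine for computers on a network, and the function names and the small number being factored are hypothetical. The last helper simply applies the definition of a MIPS year, assuming a 365-day year.

```python
from concurrent.futures import ThreadPoolExecutor
from math import isqrt

def search_chunk(n, start, stop):
    """One volunteer's share: test candidate divisors in [start, stop)."""
    for d in range(max(start, 2), stop):
        if n % d == 0:
            return d
    return None

def distributed_factor(n, workers=8):
    """Split the search for a divisor of n into chunks and farm them out."""
    limit = isqrt(n) + 1
    step = max(1, -(-limit // workers))  # ceiling division
    chunks = [(n, lo, min(lo + step, limit + 1))
              for lo in range(2, limit + 1, step)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Results come back in chunk order, so the smallest divisor wins.
        for found in pool.map(lambda args: search_chunk(*args), chunks):
            if found:
                return found, n // found
    return None  # no divisor found: n is prime (or smaller than 4)

def mips_years_to_instructions(mips_years):
    """Instructions executed in the given number of MIPS years (365-day year assumed)."""
    seconds_per_year = 365 * 24 * 3600
    return mips_years * 1_000_000 * seconds_per_year

print(distributed_factor(10403))
print(mips_years_to_instructions(5000))
```

No single worker sees the whole problem; each receives a range, reports back, and the answer emerges from the collection of small efforts.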

Another example of imaginative administration to enlarge our sphere of possibilities lies in the development of a computer operating system. The operating system Linux was not so much invented as evolved through creative re-administration. It is a prime example of the networked aesthetic of the expanded public sphere of individuals working in concert. It began with Linus Torvalds. He was in school in 1990, and owned a PC that ran Minix, an operating system designed mostly as a UNIX tutorial. UNIX is a powerful operating system which, at the time, could only be run on more powerful computers. So he imaginatively worked within his limitations and wrote a few programs (a terminal emulator and a disk driver) so that he could save files to disk. He posted these initial programs, and generously shared them as freeware: software that is distributed primarily over the Internet, and for which there is no charge. From there it took off. As a result of Torvalds’s interlocution, the operating system evolved in a democratic and Darwinian manner; anyone could contribute code to the operating system, but only the most evolved would become part and parcel of the final release version. He presided over the development, but was by no means entirely in control of it. He provided the seed idea and the guidance, but left the mechanics of its development up to the community of creative users. Today, Linux has an established base of nearly ten million users in 120 countries and is composed of millions of lines of code. All of this came about primarily because Linus didn’t follow the usual notion of creating software through the confines of a defined proprietary scheme; instead, hundreds of hackers around the world wrote it, collaborating over the Internet.

These examples serve to help define the notion of the “creative interlocutor”, a multimedia universal designer, engineer, artist, socially responsible person whose mandate is to help negotiate the crossing of boundaries. While they reside in the field of technology itself, their parallels must be generated within the humanities and arts.

1 Beginning in the Middle Ages, the traditional fields of the liberal arts were defined from classical studies in order to reveal obscured meaning in the text, symbols, doctrines, icons and mysticism of the Church. The ancient seven branches of learning included grammar, logic, rhetoric, arithmetic, geometry, music and astronomy. All were means of deciphering the hidden codes of the Biblical text. Since the Renaissance and its enlightenment, the core of this traditional education held that all areas of human endeavor are suitable topics for inquiry, regardless of their nominal concerns. An integrated individual versed in the liberal arts loves learning and is directed by intellectual curiosity, rather than by disciplinary guidelines. The Renaissance dawn from the a priori methods of the Dark Ages revealed the various facets of diverse fields and the common rays of humanism's enlightenment. Educators such as Vittorino da Feltre of Mantua taught men to be well-rounded individuals. In his boarding schools, princes and poor scholars mixed in a classical education. Character was shaped, along with the mind and body, through frugal living, self-discipline, and a high sense of social obligation. All was done with an eye to the practical: philosophy was a guide to the art of living, along with training for public life. "Students were expected to excel in all human existence."

2 Natalie Zemon Davis, Women on the Margins (Cambridge: Harvard University Press, 1995).

3 Davis, 181.

4 Italo Calvino, Six Memos for the Next Millennium (Cambridge: Harvard University Press, 1988): 87-91.

5 John Dewey, Art as Experience (New York: Perigee Books, 1934, 1980): 7.

6 Dewey, 16.

7 Marvin Minsky, The Society of Mind (New York: Simon and Schuster, 1985).

8 Johann Wolfgang von Goethe, the nineteenth-century thinker, is said to have been the last man to have known everything. This fact was remarkable for two reasons: first, there was much less accumulated human knowledge at that time, and second, Goethe had the capacity to retain even this. Today, the genius of Goethe is supplanted by the convenience of the ability to communicate to all knowledge.

9 "As a photographer, I don't care about photography, and have always been irritated with those who are concerned exclusively with f-stop and stop bath. For me, it is the communication of idea to another that holds the true excitement. Photography is merely a means to an end, and if I could achieve that end in another way, I certainly would." Jonathan Lipkin (1993) One Family's Journey, MFA Thesis, School of Visual Arts, New York.

10 Jay Bolter, Writing Space (Hillsdale: Lawrence Erlbaum Associates, 1991). Here Bolter traces the history of the effects of technology on writing. He discusses the book, the scroll, the pictographic and logographic alphabets. The computer is seen as merely the next step in a long series of technological advances that interact with the culture of the time.

11 Ibid.

12 Charles H. Traub, "The Creative Interlocutors: A Creative Manifesto." Leonardo, Vol. 30, No. 1 (MIT Press, 1997): 389-390.

13 Moholy-Nagy supported our notion of genius in his description of education at the new Bauhaus (Institute of Design) by structuring education there to place emphasis on "integration through a conscious search for relationships: artistic, scientific, technical as well as social. The intuitive working mechanics of the genius give a clue to this process. The unique ability of the genius can be approximated by everyone". From New Education, p. 9. See also Minsky's reference to Papert's principle.

14 Steven Levy, "Wisecrackers." Wired Magazine (March 1996): 128.

15 MIPS is short for millions of instructions per second, a measure of computational power. A MIPS year is the amount of computing power produced in a year by a computer capable of a million instructions per second.